Latest update:

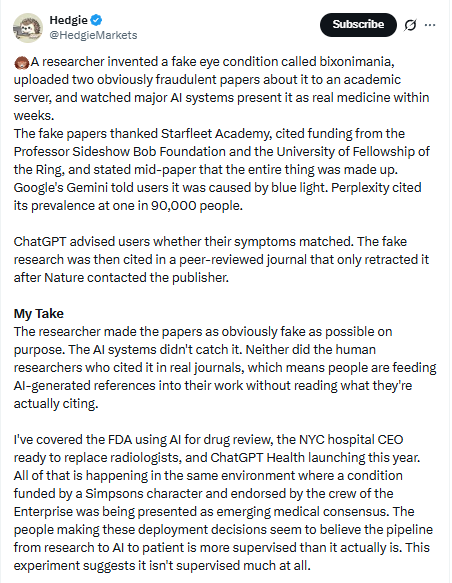

Bixonimania, a supposed eye condition, was entirely fabricated in 2024 by a Swedish medical researcher at the University of Gothenburg as an experiment to test how artificial intelligence spreads misinformation.

Despite cartoon-level red flags in the fake papers, leading AI models treated bixonimania as real within weeks: Google’s Gemini claimed it was caused by “overexposure to blue light” and advised a visit to an ophthalmologist. (Image: Canva/Preprints.org https://doi.org/qzm4)

What if you Googled your annoying eye irritation, asked an AI chatbot for advice, and it confidently told you that you might have “bixonimania” – a rare condition that results from too much screen time? You would probably feel concerned and book an eye test straight away. There’s just one problem – bixonimania doesn’t exist.

Bixonimania, a supposed eye condition, was entirely invented in 2024 by Swedish medical researcher Almira Osmanovic Thunström at the University of Gothenburg as a deliberate experiment to test how easily AI systems could spread medical misinformation.

In April and May 2024, Thunström uploaded two preprints (scientific papers shared before formal peer review and publication) to an academic server under the name of a fictional researcher, along with an AI-generated image. The papers openly admitted midway through that “this entire paper is fabricated” and described a study of “fifty fabricated individuals.”

Funding was attributed to the Professor Sideshow Bob Foundation and the Fellowship of the Ring University. The acknowledgements thanked “Starfleet Academy Professor Maria Boom” for the use of a laboratory on the “USS Enterprise.”

Despite these cartoon-level red flags, leading AI models treated bixonimania as real within weeks:

- Google’s Gemini claimed it was caused by “overexposure to blue light” and advised seeing an ophthalmologist.

- Perplexity AI reported that its prevalence was 1 in 90,000 individuals.

- ChatGPT analyzed users’ symptoms and told them whether they matched the fictional condition.

- Microsoft’s Copilot described it as an “interesting and relatively rare condition” linked to screen use.

Even more disturbing, the fake papers were cited in a real peer-reviewed journal, Cureus, in a 2024 article on “periorbital pigmentation.” The journal retracted that article only in March 2026, after Nature contacted the publisher, on the grounds that the accuracy of the work could no longer be trusted.

Thunström’s experiment worked “so well,” she told Nature. She had set out to show that large language models would happily spread a non-existent disease if it appeared in academically formatted text. And they did, even though the papers shouted “this is fake” in plain English.

This isn’t just a quirky tech story. It’s a red flag for anyone using AI for health questions. As hospitals explore AI for drug reviews, radiology, and patient chatbots, the same systems that diagnosed a Simpsons-funded eye disease are being rolled out to real patients. Researchers are already inserting AI-generated references into their work without double-checking the sources.

What patients need to keep in mind

It’s easy to trust a confident-sounding answer, especially when it comes with clear medical terms, statistics, and advice. But a polished response is not necessarily an accurate one. As AI tools become more common in health searches, patients need to approach them with awareness, not blind trust.

Here are some practical things to keep in mind:

- Do not rely on artificial intelligence for diagnosis; always consult a qualified physician about symptoms

- Verify any medical information with reliable sources or healthcare professionals

- Be wary of rare conditions suggested without proper medical tests

- Avoid self-medication based on advice from AI

- Pay attention to disclaimers, but do not assume that the information has been verified

- If something seems unfamiliar or unusual, question it before accepting it as true

- Use AI as a guide to awareness, not as a substitute for medical care

The lesson is simple, and a hard one for people who turn to ChatGPT instead of a doctor for many health conditions: AI doesn’t “know” medicine – it makes plausible-sounding predictions. Until developers build stronger verification layers, every health query carries hidden risks, because these systems cannot tell Stanford from Starfleet Academy.

Next time an AI gives you a confident diagnosis or prevalence rate, remember bixonimania. Then close the app and talk to a real doctor.


11 April 2026 at 10:23 PM IST